Basic Patterns

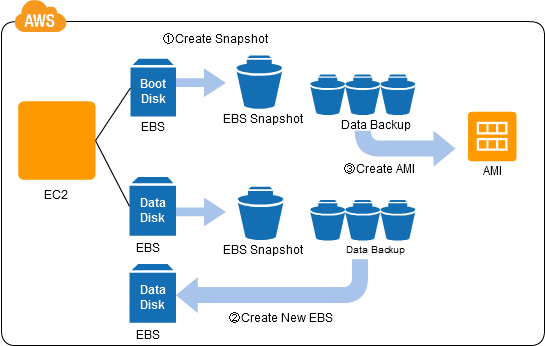

Snapshot Pattern

- Why: Automate backups of your data.

- How: Use the AWS CLI to create snapshots of your EBS volumes (including the OS volume). Snapshots are stored in S3, and you can use them for point-in-time recovery.

- Implementation:

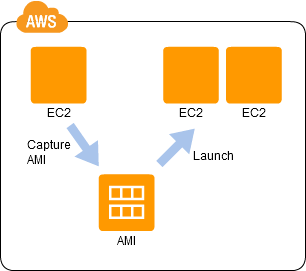
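
The snapshot step can be sketched in Python. This is a minimal sketch, not the author's implementation: the notes mention the AWS CLI, while this example assumes the boto3 SDK instead. The function name `snapshot_volume`, the description format, and the volume ID in the usage comment are hypothetical.

```python
from datetime import datetime, timezone


def snapshot_volume(ec2, volume_id, now=None):
    """Create a timestamped snapshot of one EBS volume and return its ID.

    `ec2` is assumed to be a boto3 EC2 client (boto3.client("ec2")).
    """
    now = now or datetime.now(timezone.utc)
    # The timestamp in the description makes it easy to pick a restore point.
    description = f"backup-{volume_id}-{now:%Y%m%dT%H%M%SZ}"
    response = ec2.create_snapshot(VolumeId=volume_id, Description=description)
    return response["SnapshotId"]


# Usage (assumes boto3 is installed and credentials are configured):
#   import boto3
#   snapshot_volume(boto3.client("ec2"), "vol-0123456789abcdef0")  # hypothetical ID
```

Running this from a scheduler (cron, for example) gives the automated backup the pattern describes.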

Stamp Pattern

- Why: Speed up deployment of VMs with preloaded software and configurations

- How: Create an instance with all the software and configuration in place. Convert this instance to an AMI (Amazon Machine Image), which can then be used to spin up new instances quickly.

- Implementation:

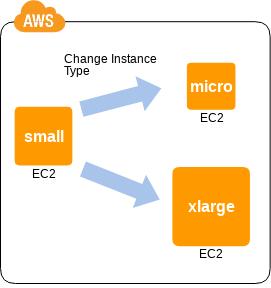
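
As an illustration, the two steps (create the AMI, then launch from it) might look like the following with boto3. This is a sketch under assumptions: `stamp_and_launch`, the image name, and the instance IDs are hypothetical, and `ec2` is assumed to be a boto3 EC2 client.

```python
def stamp_and_launch(ec2, instance_id, image_name, instance_type, count=1):
    """Create an AMI from a configured instance and launch copies of it.

    `ec2` is assumed to be a boto3 EC2 client. Returns the new instance IDs.
    """
    image_id = ec2.create_image(InstanceId=instance_id, Name=image_name)["ImageId"]
    # The AMI cannot be launched until it reaches the "available" state.
    ec2.get_waiter("image_available").wait(ImageIds=[image_id])
    response = ec2.run_instances(
        ImageId=image_id,
        InstanceType=instance_type,
        MinCount=count,
        MaxCount=count,
    )
    return [instance["InstanceId"] for instance in response["Instances"]]


# Usage (hypothetical IDs and names):
#   import boto3
#   stamp_and_launch(boto3.client("ec2"), "i-0abc", "web-golden-v1", "t3.micro", count=2)
```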

Scale Up Pattern (Vertical scaling)

- Why: It's difficult to estimate the amount of resources (CPU and memory) an application needs while it is still in development. This pattern lets you increase or reduce the resources after deployment.

- How: AWS allows you to switch the instance type as needed after deployment. However, the instance must be stopped and started again for the change to take effect.

- Implementation:

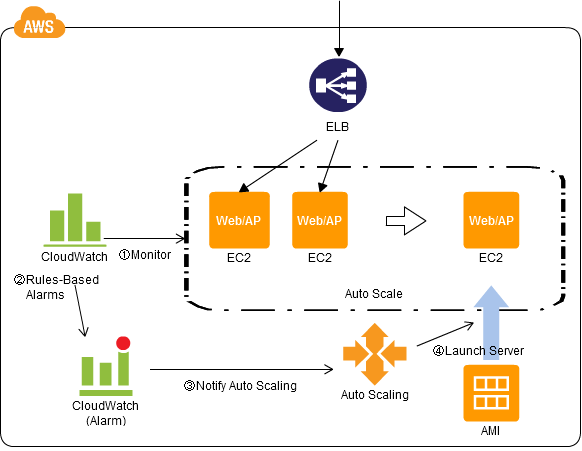
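
The stop, resize, start sequence can be sketched with boto3 as follows. This is only an illustration: `resize_instance` and the IDs in the usage comment are hypothetical, `ec2` is assumed to be a boto3 EC2 client, and the instance is assumed to be EBS-backed.

```python
def resize_instance(ec2, instance_id, new_type):
    """Change the instance type of an EBS-backed instance.

    `ec2` is assumed to be a boto3 EC2 client. The instance is unavailable
    between the stop and the start.
    """
    ec2.stop_instances(InstanceIds=[instance_id])
    # The instance type can only be changed while the instance is stopped.
    ec2.get_waiter("instance_stopped").wait(InstanceIds=[instance_id])
    ec2.modify_instance_attribute(
        InstanceId=instance_id,
        InstanceType={"Value": new_type},
    )
    ec2.start_instances(InstanceIds=[instance_id])


# Usage (hypothetical ID):
#   import boto3
#   resize_instance(boto3.client("ec2"), "i-0abc", "m5.large")
```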

Scale Out Pattern

- Why: Scaling up has an upper limit and becomes expensive.

- How: Launch multiple instances and use a load balancer to distribute the load across them. Use an Auto Scaling group (ASG) with CloudWatch alarms (monitoring) to achieve elasticity.

- Implementation:

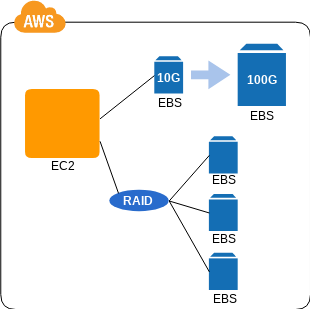
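
One way to wire an ASG to CloudWatch is a target tracking scaling policy, for which Auto Scaling creates and manages the CloudWatch alarms itself. The sketch below assumes an existing ASG and a boto3 Auto Scaling client; the function name, policy name, and 50% CPU target are hypothetical choices, not values from these notes.

```python
def add_cpu_target_tracking(autoscaling, group_name, target_cpu_percent=50.0):
    """Attach a target tracking policy that keeps average CPU near a target.

    `autoscaling` is assumed to be a boto3 client created with
    boto3.client("autoscaling"). Returns the ARN of the new policy.
    """
    response = autoscaling.put_scaling_policy(
        AutoScalingGroupName=group_name,
        PolicyName=f"{group_name}-cpu-target",
        PolicyType="TargetTrackingScaling",
        TargetTrackingConfiguration={
            "PredefinedMetricSpecification": {
                "PredefinedMetricType": "ASGAverageCPUUtilization",
            },
            "TargetValue": target_cpu_percent,
        },
    )
    return response["PolicyARN"]


# Usage (hypothetical group name):
#   import boto3
#   add_cpu_target_tracking(boto3.client("autoscaling"), "web-asg", 60.0)
```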

On-Demand Disk Pattern

- Why: Disk capacity and I/O needs are unpredictable, so it's desirable to have on-demand disk capacity.

- How: You can attach additional virtual disks (EBS volumes) when needed, and for long-term storage you can use S3.

- Implementation:

Patterns for High Availability

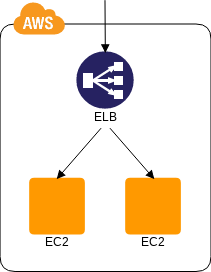

Multi-Server Pattern

- Why: Servers need redundancy so that customers are not affected if one server fails.

- How: Spin up multiple instances and use ELB to distribute the load. The instances can be arranged as active-active, active-standby, active-hot standby, etc.

- Implementation:

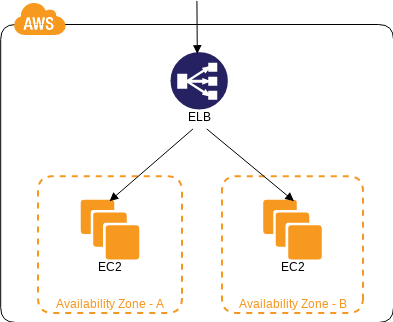

Multi-Data Center Pattern

- Why: What if the whole data center fails?

- How: Create instances in multiple Availability Zones and use ELB to distribute the load across them.

- Implementation:

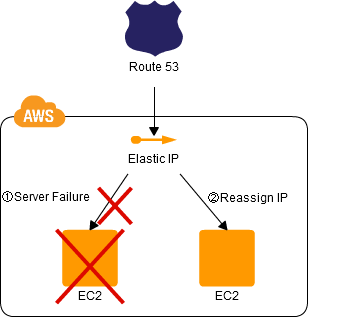

Floating-IP Pattern

- Why: To minimize downtime during an upgrade.

- How: Use an Elastic IP and move it to the standby instance while the master instance is being upgraded.

- Implementation:

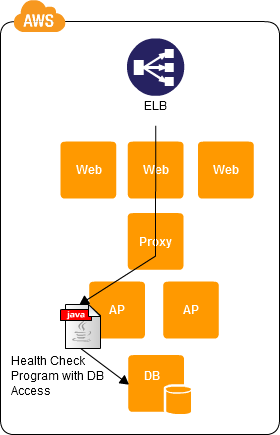
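
Moving the Elastic IP is a single API call. The following is a minimal sketch, assuming a VPC Elastic IP (identified by its allocation ID) and a boto3 EC2 client; `move_elastic_ip` and the IDs in the usage comment are hypothetical.

```python
def move_elastic_ip(ec2, allocation_id, standby_instance_id):
    """Point an Elastic IP at the standby instance and return the association ID.

    `ec2` is assumed to be a boto3 EC2 client. AllowReassociation=True lets
    the call succeed even though the address is still attached to the
    instance being upgraded.
    """
    response = ec2.associate_address(
        AllocationId=allocation_id,
        InstanceId=standby_instance_id,
        AllowReassociation=True,
    )
    return response["AssociationId"]


# Usage (hypothetical IDs):
#   import boto3
#   move_elastic_ip(boto3.client("ec2"), "eipalloc-0abc", "i-0standby")
```

Calling the same function with the original instance ID moves the address back once the upgrade is done.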

Deep Health Check Pattern

- Why: If an instance is experiencing problems, do not send load to it.

- How: Use the health checks available in ELB to set up dynamic load distribution.

- Implementation:
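
What makes a health check "deep" is that the endpoint ELB polls also verifies the dependencies behind the server. The sketch below shows only that server-side logic in plain Python; the function name, the check names, and the choice of 503 for failure are assumptions for illustration, not part of these notes.

```python
from typing import Callable, Dict, Tuple


def deep_health(checks: Dict[str, Callable[[], bool]]) -> Tuple[int, Dict[str, str]]:
    """Run every dependency check and return (HTTP status, per-check result).

    A check that returns a falsy value or raises counts as a failure. Any
    failure yields 503, which a load balancer health check treats as
    unhealthy, so traffic stops going to this instance.
    """
    results = {}
    for name, check in checks.items():
        try:
            ok = bool(check())
        except Exception:
            ok = False
        results[name] = "ok" if ok else "fail"
    status = 200 if all(value == "ok" for value in results.values()) else 503
    return status, results


# Usage: wire real probes (database ping, cache ping, ...) into the dict
# and serve the result from the path the ELB health check is configured to hit.
status, detail = deep_health({"database": lambda: True, "cache": lambda: True})
```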

Patterns for Processing Dynamic Content

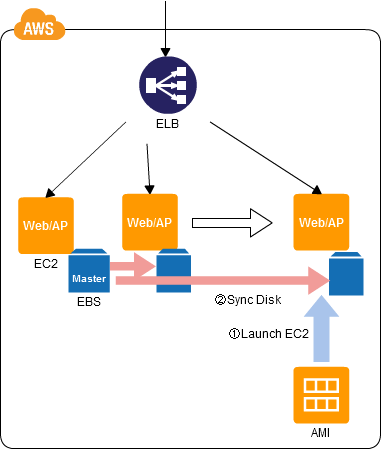

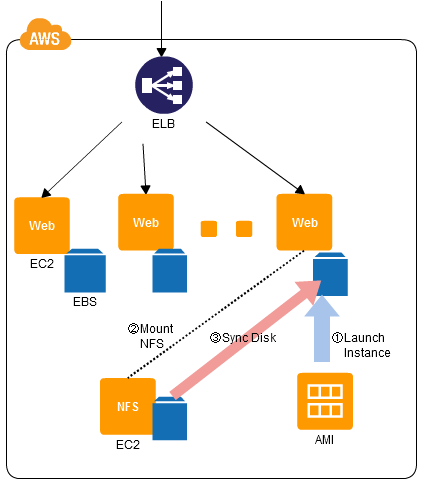

Clone-Server Pattern

- Why: Scaling out is a common technique, but many systems are not capable of handling (synchronizing) multiple instances.

- How: Use ELB with an AMI and an ASG. Use disk synchronization to synchronize the EBS data.

- Implementation:

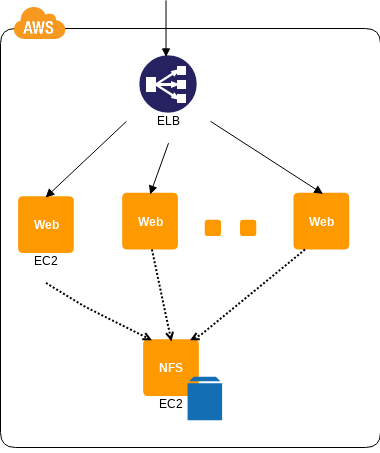

NFS Sharing Pattern

- Why: Content synchronization is necessary when distributing the load across multiple servers.

- How: Use NFS (Network File System): the master server writes the data and the slave servers read it from the NFS share.

- Implementation:

NFS Replica Pattern

- Why: Using NFS across multiple servers adds latency and reduces performance.

- How: Copy the files from NFS to the instance's EBS volume when a new instance is created.

- Implementation:

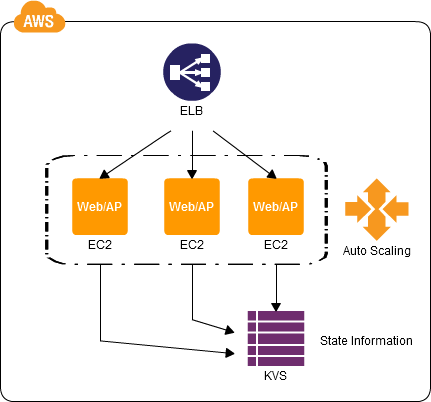

State-Sharing Pattern

- Why: User information is often used to deliver dynamic content. That state is lost if the user is sent to a different server.

- How: Store the state information in a highly durable shared data store (DynamoDB, SimpleDB, etc.). This makes the servers "stateless".

- Implementation:

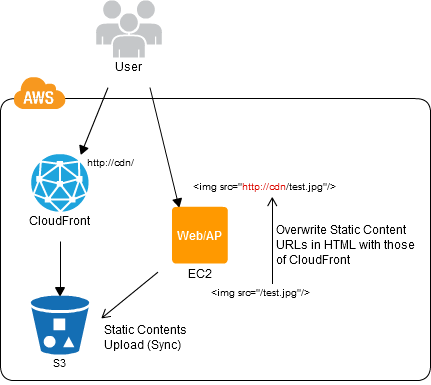
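
The idea can be illustrated without any AWS calls. In the sketch below, `SessionStore` is a hypothetical in-memory stand-in for the shared data store (a table in DynamoDB, for instance), and `handle_request` represents code that could run on any server behind the load balancer; the shopping-cart state is an invented example.

```python
import copy
import uuid


class SessionStore:
    """In-memory stand-in for a shared, durable store such as DynamoDB."""

    def __init__(self):
        self._items = {}

    def put(self, session_id, state):
        self._items[session_id] = copy.deepcopy(state)

    def get(self, session_id):
        return copy.deepcopy(self._items.get(session_id))


def handle_request(store, session_id, item):
    """Add an item to the user's cart; keeps no state on the server itself."""
    state = store.get(session_id) if session_id else None
    if state is None:
        # Unknown or missing session: start a new one.
        session_id = uuid.uuid4().hex
        state = {"cart": []}
    state["cart"].append(item)
    store.put(session_id, state)
    return session_id


# Two "servers" sharing one store: the second request sees the first one's state.
store = SessionStore()
sid = handle_request(store, None, "book")   # handled by server A
sid = handle_request(store, sid, "pen")     # handled by server B
cart = store.get(sid)["cart"]
```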

URL Rewriting Pattern

- Why: Most of the content delivered by the servers is static, so there is no point in scaling the instances up or out to handle a peak load.

- How: Rewrite the URLs of static content so that they point to storage or to a content delivery server. Put the static content on S3 and use CloudFront to deliver it using presigned URLs.

- Implementation:

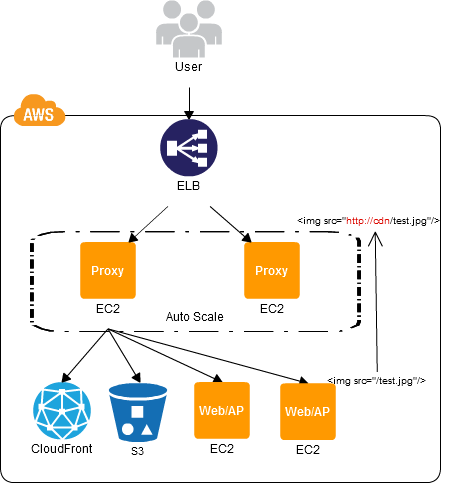
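
The rewriting step itself can be sketched as a small text transformation. This is an illustration under assumptions: static assets are assumed to live under a `/static/` path prefix, `cdn.example.com` is a placeholder host, and a regular expression is used for brevity where a real system might edit its templates instead.

```python
import re

# Matches src="..." or href="..." attributes whose path starts with /static/.
STATIC_ATTR = re.compile(r'(?P<attr>\b(?:src|href)=")(?P<path>/static/[^"]+)"')


def rewrite_static_urls(html, cdn_base):
    """Point every /static/ asset reference in `html` at the CDN host."""
    base = cdn_base.rstrip("/")
    return STATIC_ATTR.sub(
        lambda m: f'{m.group("attr")}{base}{m.group("path")}"',
        html,
    )


page = '<img src="/static/logo.png"><a href="/cart">Cart</a>'
rewritten = rewrite_static_urls(page, "https://cdn.example.com/")
```

Only the image reference changes; the dynamic `/cart` link still points at the application servers.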

Re-write Proxy Pattern

- Why: Rewriting URLs is not very efficient because it requires modifications to the existing system.

- How: Change the destination by placing a proxy server in front of the system. The proxy can redirect requests for static content to internal storage or to a CDN, e.g. an nginx proxy running on EC2.

- Implementation:

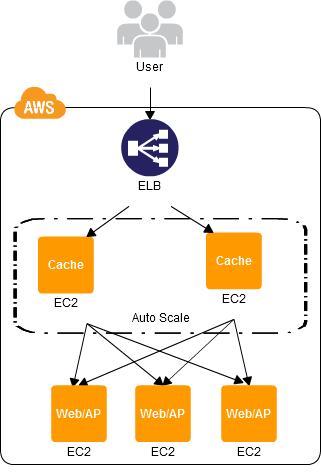

Cache Proxy Pattern

- Why: Frequently accessed static content should be cached at or near the proxy.

- How: Add a cache server with cache expiration on the same EC2 instance that runs the proxy.

- Implementation:

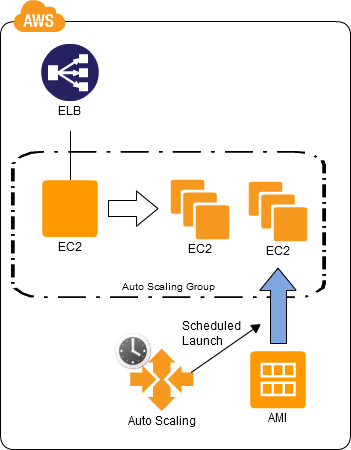
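
Cache expiration is the part of this pattern that is easiest to get wrong, so here is a minimal sketch of it in plain Python. `TTLCache` and its `fetch` callback are hypothetical names; a real cache proxy would add size limits and honor HTTP cache headers.

```python
import time


class TTLCache:
    """Cache values for a fixed number of seconds, then fetch them again."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock          # injectable so expiry can be tested
        self._entries = {}           # key -> (expires_at, value)

    def get(self, key, fetch):
        """Return the cached value for `key`, calling `fetch(key)` on a miss."""
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]
        value = fetch(key)           # miss or expired: go to the origin server
        self._entries[key] = (now + self._ttl, value)
        return value


# Usage: `fetch` would normally request the path from the origin server.
cache = TTLCache(ttl_seconds=60)
body = cache.get("/static/site.css", lambda path: f"contents of {path}")
```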

Scheduled Scale-Out Pattern

- Why: If we know in advance when traffic will be high, we can schedule the Auto Scaling group to scale out beforehand. This pre-warms the system for the high traffic.

- How: Use an ASG with a CloudWatch schedule (cron expression).

- Implementation:
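
One way to do this is with the scheduled actions built into Auto Scaling, which accept a cron expression directly; note that this swaps in Auto Scaling scheduled actions for the CloudWatch schedule mentioned above. The sketch assumes a boto3 Auto Scaling client and an existing ASG; the function name, action name, and sizes are hypothetical.

```python
def schedule_scale_out(autoscaling, group_name, cron, min_size, max_size, desired):
    """Create a recurring scheduled action that resizes the ASG.

    `autoscaling` is assumed to be boto3.client("autoscaling"). `cron` is a
    five-field cron expression, evaluated in UTC unless a time zone is set.
    """
    autoscaling.put_scheduled_update_group_action(
        AutoScalingGroupName=group_name,
        ScheduledActionName=f"{group_name}-scheduled-scale-out",
        Recurrence=cron,
        MinSize=min_size,
        MaxSize=max_size,
        DesiredCapacity=desired,
    )


# Usage (hypothetical values): scale to 10 instances at 08:00 UTC on weekdays.
#   import boto3
#   schedule_scale_out(boto3.client("autoscaling"), "web-asg", "0 8 * * 1-5", 4, 20, 10)
```

A second scheduled action with smaller sizes would scale the group back in after the peak.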

Patterns for Delivering Static Content

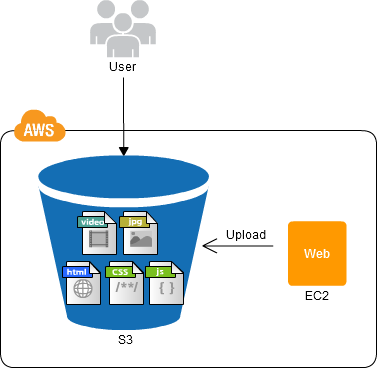

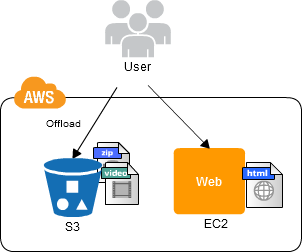

Web Storage Pattern

- Why: Delivering large files can put too much network load on the servers, so offload them by serving the content from web storage.

- How: Add the large files to an S3 bucket and either make them public or share them through presigned URLs.

- Implementation:
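
Generating a presigned download link might look like this with boto3. This is a sketch, assuming a boto3 S3 client with credentials allowed to read the object; `presigned_download_url`, the bucket name, and the key are hypothetical.

```python
def presigned_download_url(s3, bucket, key, expires_in=3600):
    """Return a time-limited URL that lets anyone holding it download one object.

    `s3` is assumed to be a boto3 S3 client (boto3.client("s3")). The object
    itself can stay private; the URL stops working after `expires_in` seconds.
    """
    return s3.generate_presigned_url(
        "get_object",
        Params={"Bucket": bucket, "Key": key},
        ExpiresIn=expires_in,
    )


# Usage (hypothetical bucket and key):
#   import boto3
#   presigned_download_url(boto3.client("s3"), "my-downloads", "videos/intro.mp4", 900)
```

The client then downloads directly from S3, so the file never passes through the web servers.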

Direct Hosting Pattern

- Why:

- How:

- Implementation: